Artificial intelligence is reshaping industries at a remarkable pace, but this growth brings a new category of digital risk. AI hacking refers to the techniques used to exploit, manipulate, or deceive AI systems. From targeting machine learning models to injecting malicious prompts into large language models, the threat landscape continues to expand. Security professionals, developers, and everyday users all benefit from understanding these emerging vulnerabilities.

What Is AI Hacking and Why Does It Matter

AI hacking covers a range of methods that target the data, logic, and decision-making processes within AI systems. Unlike traditional cyberattacks that exploit software bugs, these techniques take advantage of how machine learning models learn and generalize. As organizations integrate AI into healthcare, finance, and critical infrastructure, the consequences of a successful attack grow significantly. Recognizing these threats is the foundation of any meaningful AI security strategy.

- AI models can be targeted through their inputs, training data, or deployment environment

- Threat actors exploit weaknesses in model architecture and surrounding infrastructure

- Attacks can cause biased outputs, unauthorized data access, or full system compromise

How Machine Learning Models Become Targets

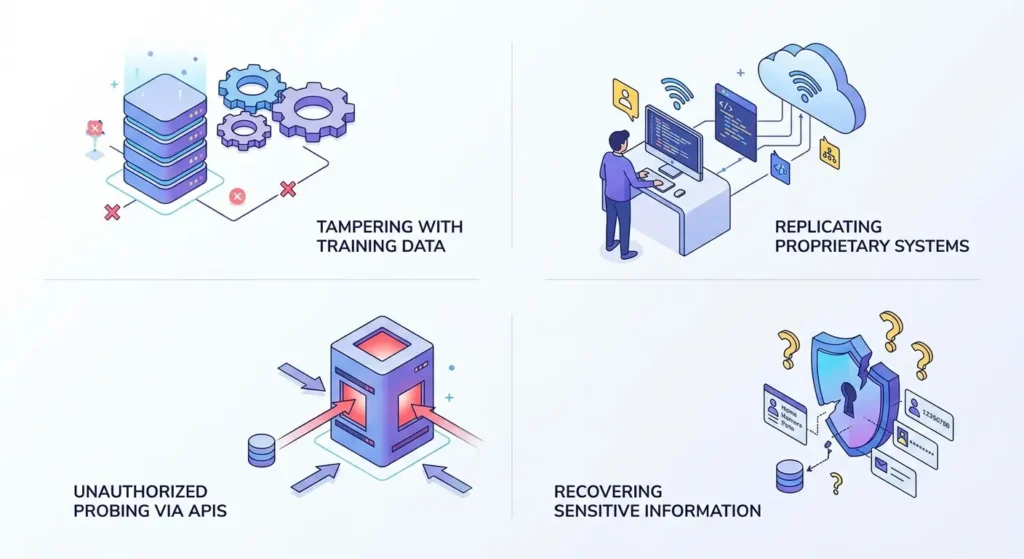

Machine learning models depend on large datasets for training, which creates exploitable attack surfaces. If training data is tampered with, the resulting model may produce harmful or inaccurate predictions. Attackers can also reconstruct model behavior by querying it repeatedly, a process called model extraction. As AI hacking methods grow more advanced, defenders must stay current on emerging threats.

- Model extraction allows adversaries to replicate proprietary systems without direct access

- Insecure APIs can expose machine learning models to unauthorized probing

- Inference attacks can sometimes recover sensitive information embedded in training data

Adversarial Attacks and How They Work

Adversarial attacks are among the most studied techniques within the broader field of AI hacking, designed to produce incorrect model outputs through carefully crafted inputs. A well-known example involves altering a small number of pixels in an image so that a computer vision system misclassifies it. These changes are often imperceptible to humans while reliably deceiving the target model. Adversarial robustness has since become a central focus in deep learning security research.

- Adversarial examples can target image, text, and audio-based AI models

- White-box attacks use full model access to generate precisely targeted perturbations

- Black-box attacks rely only on model outputs, requiring no internal access

Prompt Injection in Large Language Models

Prompt injection has emerged as one of the most studied vulnerabilities in AI hacking, particularly within large language model deployments. This technique embeds hidden instructions in user inputs to override or redirect a model’s intended behavior. Attackers can extract confidential system instructions, bypass safety filters, or generate harmful outputs using this method. OpenAI and other AI developers have confirmed prompt injection as a serious ongoing threat to deployed systems.

- Direct prompt injection targets input fields that users interact with directly

- Indirect prompt injection hides malicious instructions in external content the model reads

- OWASP lists prompt injection among the top vulnerabilities in large language model deployments

Understanding AI Hacking Through Model Poisoning

Model poisoning introduces corrupted data into a training pipeline before a model is finalized. This attack embeds hidden backdoor triggers that cause harmful behavior when a specific input is encountered during deployment. Detecting poisoned models is difficult because overall performance typically appears normal during standard testing. Strict data governance and anomaly detection throughout training are the primary defenses against this threat.

- Backdoor attacks are prevalent in federated learning environments with distributed data sources

- Poisoning can be introduced at the data collection, labeling, or aggregation stage

- Regular dataset audits are essential for identifying and removing corrupted training samples

Neural Network Exploits Explained

Neural networks power most modern AI applications, and their complexity creates inherent security challenges. Their behavior emerges from billions of learned parameters rather than explicit logic, making outcomes harder to predict and audit. Adversaries exploit this unpredictability to craft inputs that produce unintended results.

- Transfer learning models may carry vulnerabilities from their original pretraining datasets

- Overfitted models are susceptible to memorization-based inference attacks on sensitive data

- Interpretability tools help security researchers identify weak points within network architectures

Real-World Implications of AI-Powered Attacks

Attacks on AI systems are occurring across real deployments in finance, healthcare, and government. Threat actors use machine learning to automate phishing, generate convincing deepfakes, and defeat biometric authentication systems. AI hacking capabilities have been documented in nation-state cyber operations and organized criminal campaigns. The dual-use nature of AI technology means offensive and defensive tools frequently share the same underlying methods.

- AI-generated phishing messages outperform manually written versions in reported success rates

- Deepfake attacks enable impersonation that is difficult to detect without specialized verification tools

- Automated AI scanners can probe systems for vulnerabilities faster than any human security team

Ethical Hacking and AI Security Testing

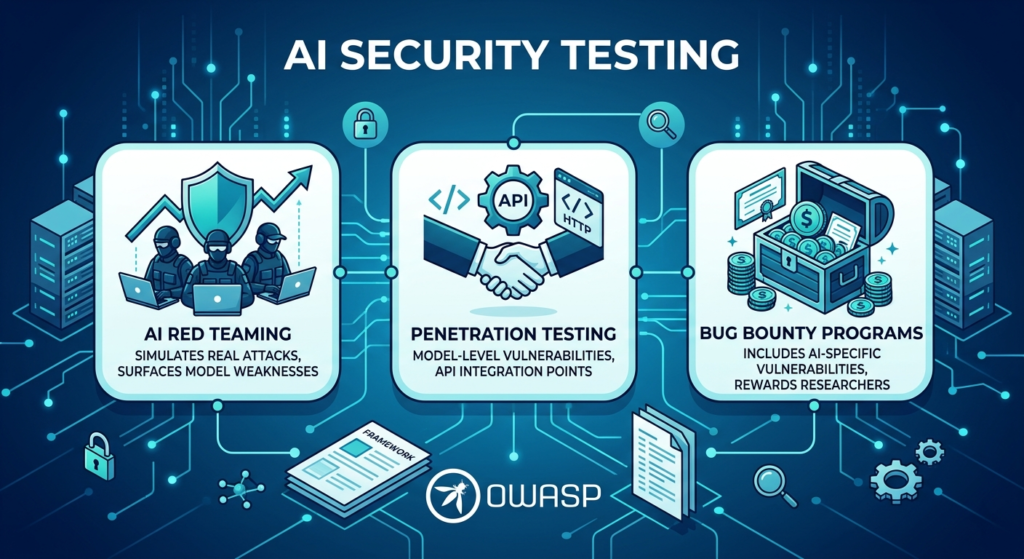

Ethical hacking in the AI context means authorized testing of machine learning models to expose weaknesses before adversaries do. Security researchers probe for adversarial vulnerabilities, data leakage risks, and logic errors using structured methodologies. OWASP has published frameworks specifically for testing large language model deployments in production environments. Red teaming AI systems has become a standard practice in responsible development programs.

- AI red teaming simulates real attack conditions to surface model weaknesses early

- Penetration testing covers model-level vulnerabilities and API integration security points

- Bug bounty programs have expanded to include AI-specific vulnerability categories

How to Defend Against AI-Based Threats

Effective defense against AI-based threats requires security measures embedded at every stage of the AI lifecycle. Input validation, output monitoring, and adversarial training are among the most effective countermeasures available. Access controls on model APIs and detailed interaction logs provide essential additional protection. Building security into the AI development process from the start is far more effective than addressing it after deployment.

- Adversarial training strengthens model robustness by exposing it to attack samples during development

- Differential privacy methods help protect sensitive information in training datasets

- Continuous output monitoring enables early detection of model compromise or behavioral drift

Conclusion

The field of AI hacking is growing in sophistication and real-world impact. As AI systems become embedded in critical infrastructure, financial services, and everyday communication platforms, securing them is a shared responsibility across developers, organizations, and regulators. A proactive security posture built on awareness and layered defenses is the most effective approach available today. Treating security as a core design requirement is essential for any AI system deployed at scale.

Frequently Asked Questions

What is AI hacking in simple terms?

AI hacking is the practice of exploiting vulnerabilities in artificial intelligence systems to manipulate their behavior or extract sensitive data. It covers techniques including adversarial attacks, prompt injection, and model poisoning, and applies across machine learning models, neural networks, and large language model applications in real-world use.

How does AI hacking differ from traditional cyberattacks?

Traditional cyberattacks target software flaws, network weaknesses, or human behavior. Attacks on AI systems focus on data integrity, model weights, and decision logic, requiring knowledge that combines cybersecurity and machine learning. This distinction makes it a rapidly growing and specialized area within the broader security field.

Can AI-based attacks affect everyday users?

Yes. Compromised recommendation systems, manipulated content filters, and deepfake-based fraud directly affect individuals who use AI-powered applications. When an AI system is attacked, the consequences often reach everyday users who have no visibility into the underlying model or its vulnerabilities.

What can organizations do to reduce AI security risks?

Organizations should adopt adversarial training, enforce data privacy frameworks, and conduct regular audits of their machine learning pipelines. Following guidance from bodies such as OWASP and integrating AI security into the full development lifecycle are foundational steps for reducing exposure to AI hacking risks.